HRTF spatial upsampling in the spherical harmonics domain employing a generative adversarial network

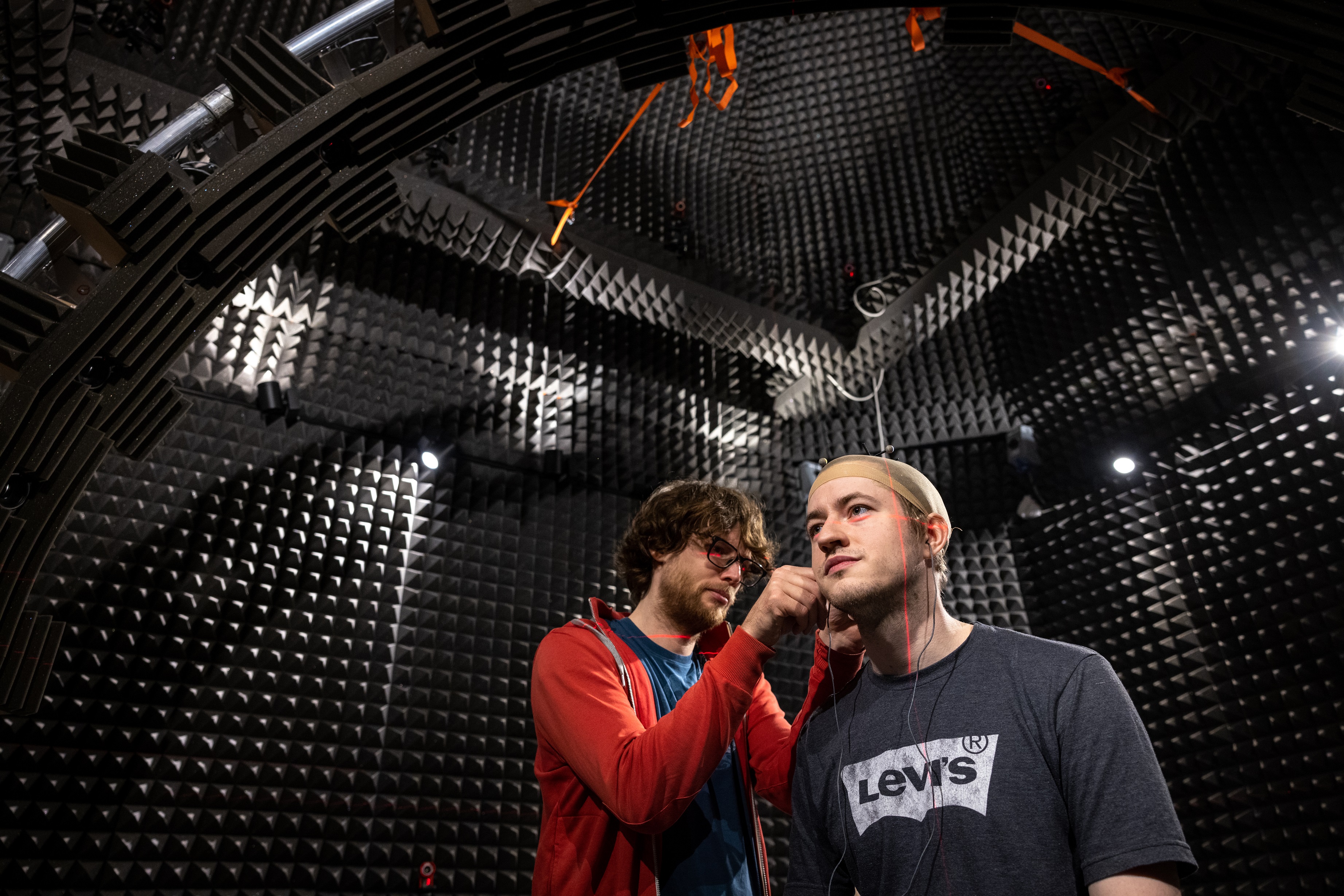

A Head-Related Transfer Function (HRTF) captures the alterations a sound wave undergoes between its source and the entrances of a listener's left and right ear canals, and is essential for creating immersive experiences in virtual and augmented reality (VR/AR). However, measuring personalized HRTFs requires sophisticated equipment and a time-consuming data acquisition process. To address these challenges, various techniques for HRTF interpolation and upsampling have been proposed. This paper shows how Generative Adversarial Networks (GANs) can be applied to HRTF upsampling in the spherical harmonics domain. We propose using an Autoencoding Generative Adversarial Network (AE-GAN) to upsample low-degree spherical harmonics coefficients and obtain a more accurate representation of the full HRTF set. The proposed method is benchmarked against two baselines: barycentric interpolation and HRTF selection. Results from a log-spectral distortion (LSD) evaluation suggest that the proposed AE-GAN has significant potential for upsampling very sparse HRTFs, achieving a 17% improvement over the baseline methods.
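For readers unfamiliar with the evaluation metric, the following is a minimal sketch of one common formulation of log-spectral distortion: the root-mean-square dB error across frequency bins, averaged over directions. It is an illustration only and not necessarily the exact definition used in the paper. The function name `log_spectral_distortion`, the `eps` guard, and the toy magnitude values are all assumptions made for this example.

```python
import math


def log_spectral_distortion(reference, estimate, eps=1e-12):
    """Mean log-spectral distortion in dB (hypothetical helper).

    reference, estimate: sequences of spectra, one per direction, each a
    sequence of per-frequency-bin values (magnitudes or complex bins).
    eps is an assumed small constant that avoids log10(0).
    """
    if len(reference) != len(estimate):
        raise ValueError("reference and estimate need the same number of directions")

    per_direction = []
    for ref_spec, est_spec in zip(reference, estimate):
        if len(ref_spec) != len(est_spec):
            raise ValueError("spectra need the same number of frequency bins")
        # Squared dB error of the magnitude ratio in each frequency bin.
        squared_db = [
            (20.0 * math.log10((abs(r) + eps) / (abs(e) + eps))) ** 2
            for r, e in zip(ref_spec, est_spec)
        ]
        # RMS over frequency bins gives the LSD for this direction.
        per_direction.append(math.sqrt(sum(squared_db) / len(squared_db)))

    # Average over all directions.
    return sum(per_direction) / len(per_direction)


# Toy data (made up): two directions, three frequency bins each.
reference = [[1.0, 0.5, 0.25], [0.8, 0.4, 0.2]]
estimate = [[10.0 * v for v in row] for row in reference]

# Every bin is off by a factor of 10, so the result is close to 20 dB.
print(log_spectral_distortion(reference, estimate))
```

A lower value indicates that the upsampled magnitude spectra are closer to the measured ones; identical spectra give 0 dB.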

Related Project

Transforming auditory-based social interaction and communication in AR/VR